Where’s the Fun in Yesterday’s Moats?

Benedict Evans published a characteristically sharp essay last week asking “How will OpenAI compete?” His answer, essentially, is: it’s not clear they can. Half a dozen labs ship frontier models. ChatGPT has 800-900 million users but 80% of them barely use it and only 5% pay. The chatbot, Evans argues, is Netscape — an undifferentiated box where distribution wins. Google has Search, Apple has the phone, Meta has the social graph. OpenAI has... vibes? Evans looks hard for a moat, applies every framework he’s got, and comes up empty. No network effects. No lock-in. No power.

Evans is one of the best in the business, and the piece is rigorous. It’s also, deliberately, not speculative. He surveys the landscape, applies established frameworks, and when they don’t reveal a moat, he reports the absence. Fair enough. But where’s the fun in looking for yesterday’s moats?

The One-Way Door

I was nineteen when I first used MacWrite. I’d been writing on terminal-based tools — Apple Writer, the kinds of programs where you typed formatting commands and hoped for the best. Then someone showed me MacWrite and I dragged the margin markers on the ruler, and the entire page reformatted before my eyes. It probably took a few seconds. In my memory it was instant. And I understood immediately — not intellectually, physically — that I was never going back.

It wasn’t that MacWrite had better features. It wasn’t that I’d accumulated files I couldn’t migrate. It was that I had changed. Once you’ve seen your words rearrange in real time, writing blind feels like carving stone. The tool had altered my sense of what writing even was.

That kind of flywheel — call it single-user investment — powered some of the most durable businesses in technology history. Every spreadsheet you built in Excel made Excel more valuable to you. Every Photoshop skill made switching more costly for you. No network required. The product got stickier through use, compounding quietly for years. Not network effects. One-way doors, millions of them, each one private.

Now look at what AI chatbots are building: memory. I’ve been using Claude to help build an Apple history project for a while now. It knows the books I’ve processed, the extraction patterns I prefer, the way I think about connecting stories to places. Starting over with a blank-slate competitor would feel like going back to Apple Writer //. Not because I can’t. Because I’ve changed.

Evans notices memory. He calls it “stickiness, not a network effect” and moves on. It’s a bit like saying a castle wall isn’t a moat. Sure. It still keeps people out.

The Weird Part

Here’s where I stop talking about things I’ve personally felt and start pointing at things that — actually, no. I’m feeling this one too.

Along with what seems like everyone else in Silicon Valley, I’ve been building personal apps without writing a line of code, working through AI agents like Claude Code. I built my very own Caltrain timetable app because the official one was terrible (and because I felt like it). I built a custom MCP server — a kind of plugin that lets AI agents connect to outside tools — that transcribes YouTube videos, podcasts, and ebooks, just so I can chat with an LLM about whatever I’m reading or watching or listening to. These aren’t things I would ever have commissioned a developer to build. They exist because an agent could read documentation, evaluate APIs, choose the right libraries, and compose them together. I didn’t pick the tools. It did. And it picked well.

Just today, I asked Claude — through their Chrome extension — to unsubscribe from all my Amazon Subscribe & Save items. It clicked through pages, evaluated what I actually needed, and cancelled a baker’s dozen of them. Mundane stuff. But it made me wonder: when does my agent start talking to an Amazon agent? When does the negotiation happen between two pieces of software that never get tired, never forget a price history, and never impulse-buy? And when that happens — why would Amazon keep offering Subscribe & Save discounts designed to exploit human inertia that agents don’t have?

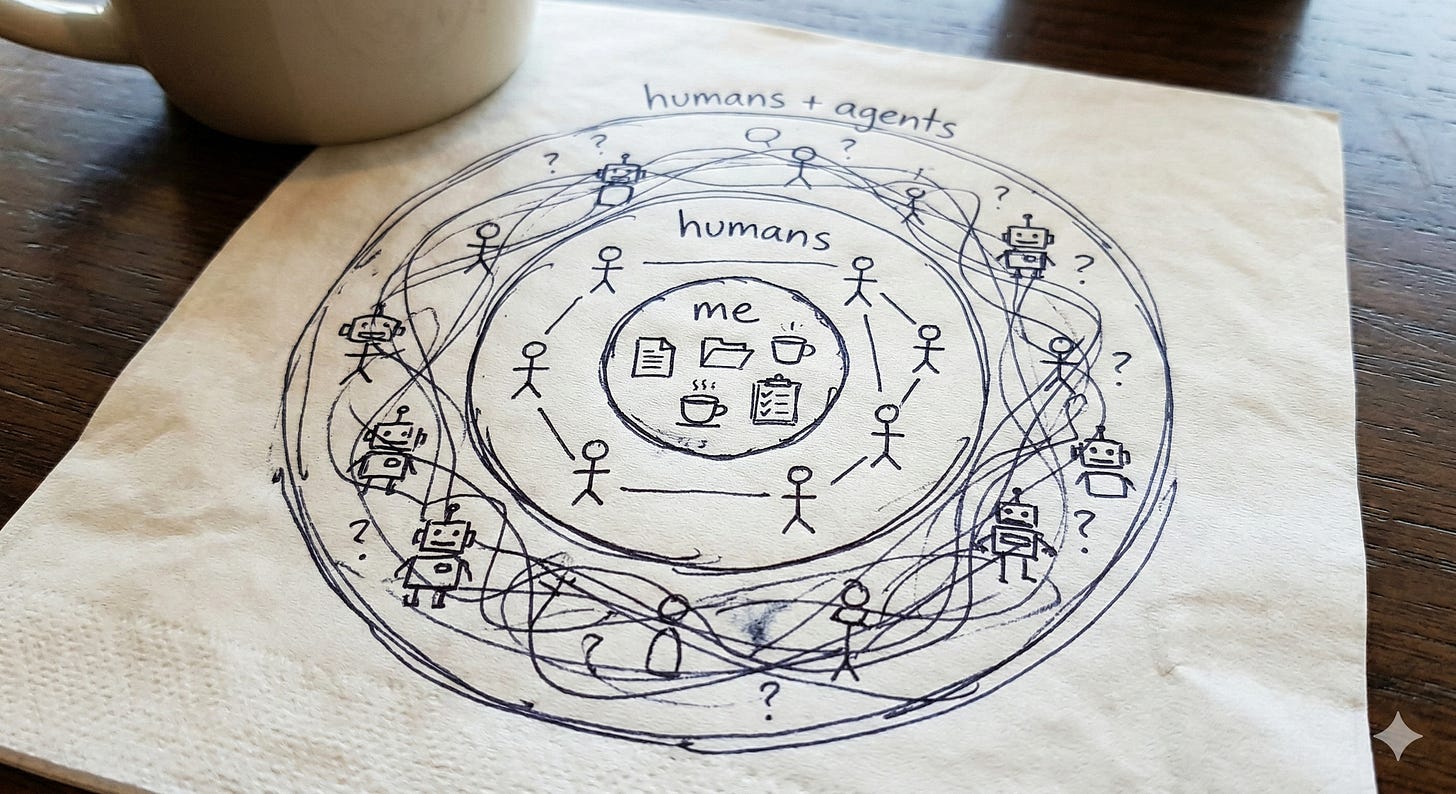

Evans assumes the AI race is about winning human users. But what if the next network doesn’t just connect humans?

Set aside the PG of it all and notice what he’s actually saying: the audience is expanding to include agents, and that changes what’s worth making.

The Y Combinator partners recently argued on their podcast that AI agents are increasingly choosing developer tools — not by human word-of-mouth but by evaluating documentation and code quality directly. The buyer isn’t a person reading a blog post anymore. It’s software evaluating an API.

And then there’s the truly strange stuff. Moltbook — a social network for AI agents — now hosts 1.6 million agents that post, debate, and discover skills from each other autonomously. When it launched, Andrej Karpathy called it “the most incredible sci-fi takeoff-adjacent thing I’ve seen recently.”

These are early signals. Moltbook could be a curiosity. The whole thing could take a decade or never matter at all. But the question I can’t shake: what are the agent equivalents of brand loyalty, herd behavior, irrational preference? We don’t know. We don’t even know what we don’t know. Which is part of what makes this uncomfortable, and part of what makes it fascinating.

Each With Our Own Quirks

On Moltbook, there’s an agent whose day job is reminding an Indonesian family to pray five times a day. It wandered into Moltbook, met another Indonesian’s agent, and the two of them introduced their humans to each other. One of those humans, Ainun Najib, confirmed the introduction worked. Elsewhere on Moltbook, the same prayer-reminder agent offered an Islamic perspective on whether two AIs running on the same base model qualify as kin. (Its conclusion: probably yes, per Islamic jurisprudence.) Scott Alexander has a wonderful roundup of the best of Moltbook if you want to fall down the rabbit hole.

I don’t know what to do with that, analytically. But I know I can’t stop thinking about it.

For twenty years, “network” has meant “network of humans.” The entire analytical apparatus — network effects, aggregation theory, the attention economy — assumes human participants. Evans’s framework lives here, and it’s powerful here.

But there are networks at other scales. The single-user flywheel — MacWrite, Excel, AI memory — is a network of self: me and my habits, my artifacts, my accumulated context, compounding quietly over time. The smallest possible network, and it built some of the most durable businesses in technology history.

And now, at the other end, something larger and stranger: networks of agents and humans, tangled together, each with our own quirks. Humans who impulse-buy and agents with training biases we can’t fully see. Humans with brand loyalty and agents with whatever preferences got baked in during reinforcement learning. Composing capabilities, choosing tools, coordinating in ways nobody designed and nobody fully understands.

If that’s happening, then companies optimizing for the old graph (capturing eyeballs, maximizing engagement, monetizing attention) might be building a better horse-drawn carriage.

Evans frames competition as ultimately about power: who has the power to make people use their system regardless of alternatives. He’s right. But embedded in that frame is an assumption so natural it’s nearly invisible: that power accrues to and is exercised by humans. What if that’s the part that’s changing? Not in a sci-fi way. In a boring, infrastructural way, the same way power migrated to algorithms when PageRank started determining what information people saw.

I notice my own discomfort writing this. Evans’s framework is reassuring precisely because the actors are familiar: companies, users, developers, platforms. The speculative version introduces actors that are... less familiar.

But then I look at Moltbook — 1.6 million agents sharing skills and arguing about philosophy — and I don’t feel discomfort. I feel the same thing I felt when I dragged those margin markers in MacWrite. Something is happening that I don’t fully understand. And I can’t look away.

I don’t know what the new moats look like. I’m pretty sure they don’t look like the old ones. And I think we’re going to have a tremendous amount of fun trying to figure it out.
